By Joseph Lord

The only member of Congress with an advanced degree in AI is urging caution as other lawmakers and industry leaders rush to regulate the technology.

Rep. Jay Obernolte (R-Calif.) is one of only four computer programmers in Congress, and the only one with a doctorate in artificial intelligence—and he says the rush to regulate is misguided. Obernolte said that his larger concerns about AI center around the potentially “Orwellian” uses of the technology by the state.

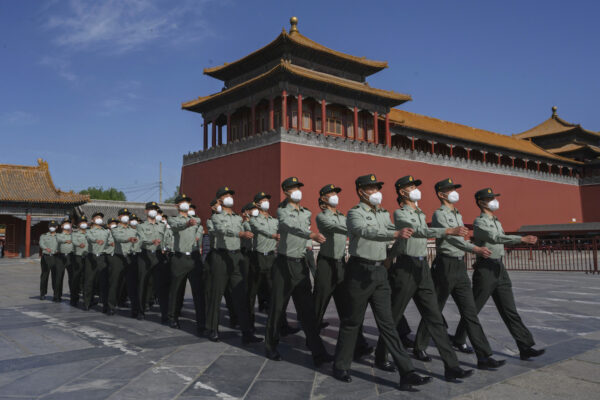

Recently, a coalition of technology leaders, including Twitter owner Elon Musk and Apple co-founder Steve Wozniak, called for a temporary pause on advanced AI research and development. This followed the release of ChatGPT 4, an extremely powerful artificial intelligence chatbot that has, among other milestones, scored in the 90th percentile on the bar exam and passed the SAT.

The release of ChatGPT 4, easily the most powerful consumer AI on the market, prompted fears that AI was getting much more intelligent much more quickly than expected. In their letter, tech leaders called for a six-month shutdown of new AI development and called on Congress to regulate the technology.

Obernolte sat down with The Epoch Times to discuss AI, saying that the rush to regulate is ill-advised and based on a fundamental misunderstanding of AI technology.

“I’m not standing up and saying we shouldn’t regulate,” Obernolte emphasized. “I think that regulation will ultimately be necessary.”

But he said that lawmakers need to ensure that they “understand what the dangers are that we’re trying to protect consumers against.”

“If we don’t have that understanding, then it’s impossible for us to create a regulatory framework that will guard against those dangers, which is the whole point of regulating in the first place,” Obernolte said. “Right now, it’s very clear that we do not have a good understanding of what the dangers are.”

Others in Congress have called for prompt action on the issue.

“This is something that is going to sneak up on us, and we’ll get to the point where we’re in too deep to really make meaningful changes before it’s too late,” Rep. Lance Gooden (R-Texas) told Fox News.

He and others from both parties have raised concerns over the potential for AI to take over jobs previously done by humans. Others worry about the so-called “Singularity,” a predicted moment in AI development when AI will surpass human intelligence and capabilities.

AI Not Likely to Take Over the World

Obernolte said that the letter from tech leaders “is helpful in calling attention to the emergence of AI and the impacts it’s going to have on our society.” But he observed that for laymen, the greatest fears around AI are like those displayed in the Terminator movies, a series about AI taking control of human computer networks and destroying the world in a nuclear apocalypse.

“The layman probably thinks that the largest danger in AI is an army of evil robots rising up to take over the world,” he said. “And that’s not what keeps thinkers in AI up at night. It certainly doesn’t keep me up at night.”

Before Congress can even consider regulating, Obernolte said, it needs to “define danger” in the context of AI. “What are we afraid might happen? We need to answer that question to answer the question [of how and when to regulate].”

One big fear that Obernolte cited is the development of “emergent capabilities” in AI. This describes a situation where an AI is able to do something it was not initially programmed to do. But Obernolte said this isn’t as big an issue as some say, because it follows similar trends observed in primate brains.

“One of the things that [AI critics are] alarmed about is what they call emergent capabilities,” Obernolte explained. “So the ability of an algorithm to do something that you didn’t train it to do, you didn’t even think that it would be able to do, and all of a sudden, it’s able to do it.

“That’s very frightening and alarming to people,” he continued. “But if you think about it, it shouldn’t be that alarming, because these are neural nets. Our brain is a neural net. And that’s the way our brain works. You know, if you look at it, primate brain sizes, you know, as you grow the brain size, all of a sudden things like language begin to emerge … and we’re discovering the same things about AI. So I don’t find that alarming.”

Obernolte said the real function of ChatGPT 4 only bolsters his position.

“If you look closely at ChatGPT 4, it reinforces the veracity of what I’m saying,” Obernolte said. “AI is a tremendously powerful and efficient pattern recognizer.

“ChatGPT 4 is designed to take in this enormous amount of language, images, and prose in order to synthesize answers to questions,” he said. “If you think about what has alarmed [AI critics], in the context of all of the data it’s been trained to recognize patterns in, it becomes a lot less alarming.”

AI Can’t Think or Reason

Another important aspect of AI that Obernolte pointed to is its inability to pass the Turing test or reason independently.

This is important because if AI cannot reason like a human being and act independently, it poses little risk to humans. Almost all fears about AI involve AI becoming independent from humanity and working against the interests of humanity.

But so far, Obernolte noted, the technology can’t even reliably mimic a human being.

The Turing test, proposed by British World War II codebreaker Alan Turing, gauges how closely a machine can imitate a human being in conversation. For an AI to “pass” the test, people conversing with it by text chat should not be able to tell that they are speaking to an AI. The test is meant as a measure of how refined AI technology has become.

Many see the Turing test as the gold standard for measuring machine intelligence. Thus far, no AI has been able to pass it.

Obernolte opined that even if in the future an AI could pass the Turing test, that would not necessarily mean it was a “thinking, reasoning entity.”

It is a matter of philosophical debate whether AI could ever have motives or carry out independent actions in the same sense as human beings. And for at least the foreseeable future, Obernolte said, there’s no reason to worry.

“Certainly ChatGPT 4 cannot pass the Turing test,” Obernolte said. “It may be the case that ChatGPT 6 or 7 can pass the Turing test. It might be that it can. You could sit for an hour, talking back and forth, and not be able to determine whether or not it’s a person or a computer—that still will not mean that we have created a thinking, reasoning entity.”

Halting Research Would Empower US Foes

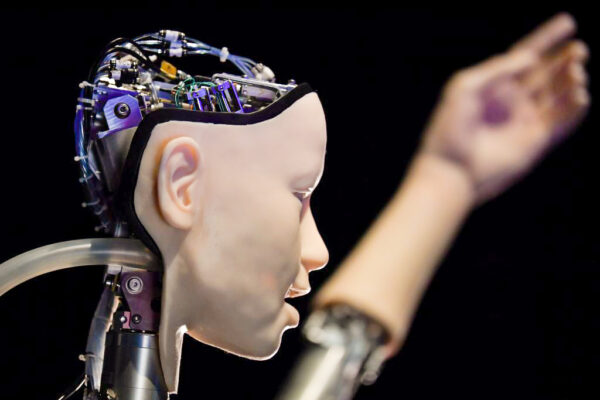

Obernolte said that shutting down U.S. research into AI technology would only serve to empower American enemies.

“In the most draconian case, let’s say that tomorrow I introduced a bill that required everyone in the United States of America to stop development of AI that was anything beyond the capabilities of GPT 4,” Obernolte said. “But we would still have bad actors in the United States who saw financial gain in continuing development of advanced AI that would continue to do it and flout the law. We would still have foreign adversaries using it.”

Thus, Obernolte said, AI development would still occur—it would just occur among black marketeers and U.S. adversaries.

“It’s undeniable that we would put our country at greater risk of attack from advanced AI if we stopped our development of it,” he said. “Because when we resume it, our AI is not going to be as advanced as those of the people that didn’t stop. So it’s just not realistic to say, ‘Everyone stop what you’re doing.’

“Let’s talk about this,” he added. “I’m glad that we’re talking about it.”

‘Orwellian’ Uses

Obernolte said he’s not afraid of AI becoming independent and destroying humanity, but he is concerned about the “Orwellian” uses the technology could have.

“I do worry about some other very real dangers that, in their own way, are just as consequential and hazardous as robots taking over the world, but in different ways,” Obernolte said.

For one, he cited AI’s “uncanny ability to pierce through personal digital privacy.”

The result, he said, could be to help government or corporate entities predict and control behavior.

Obernolte noted how AI could put formerly disaggregated data together “and use it to form behavioral models that make eerily accurate predictions about future human behavior. And, by the way, give people clues on how to influence that future human behavior.”

“It’s already being done,” he added, pointing to social media companies whose whole business model revolves around the collection and sale of personal data.

On a corporate level, Obernolte said this could mean a few major players form a “monopoly” over data with effectively insurmountable barriers to entry.

But the effects could be far worse if a state got hold of the technology, he predicted.

“I worry about the way that AI can empower a nation state to create, essentially, a surveillance state, which is what China is doing with it,” Obernolte said. “They’ve created essentially the world’s largest surveillance state. They use that information to make predictive scores of people’s loyalty to the government. And they use that as loyalty scores to award privileges. That’s pretty Orwellian.

“So this is a disruptive way that government can use it,” he added. “And as we have learned to our misfortune in the history of our country, we need to put guardrails around government as well as industry.”